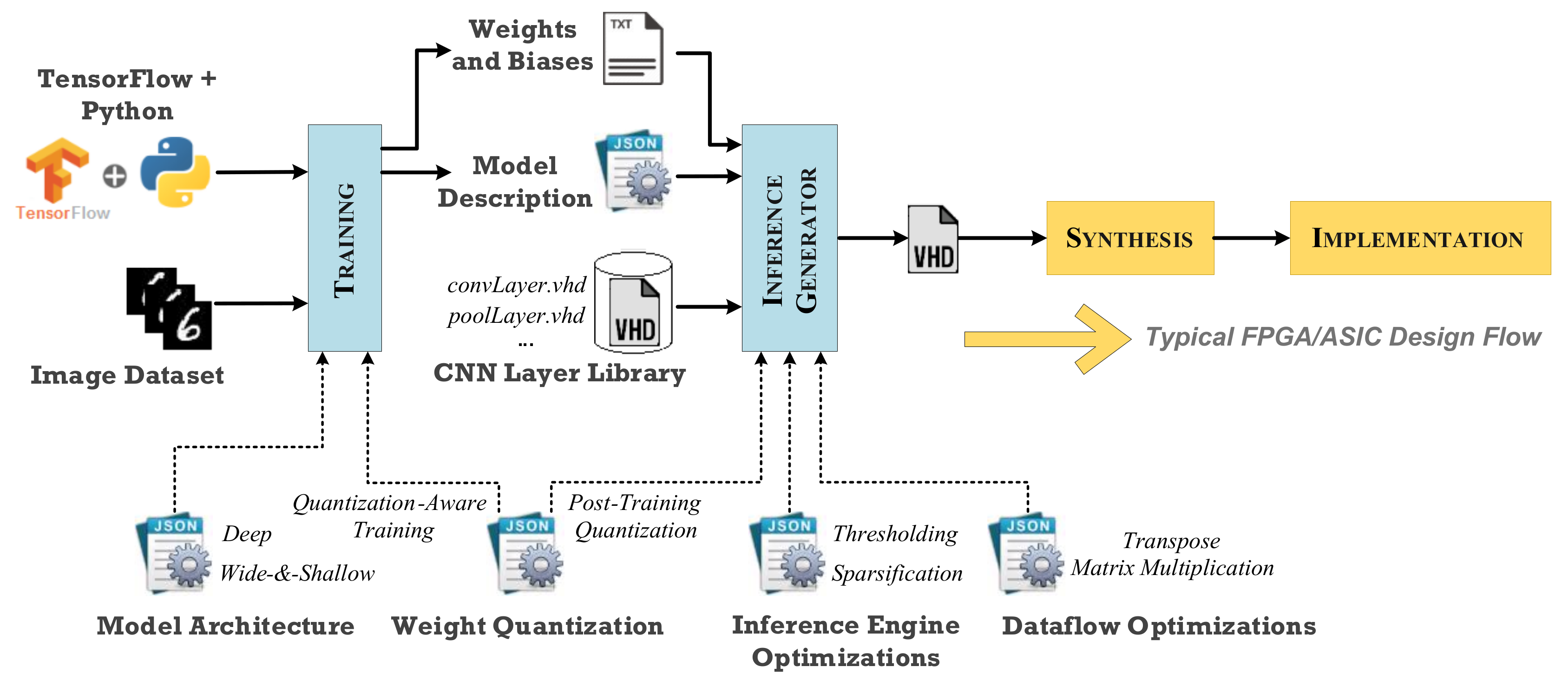

Technologies | Free Full-Text | A TensorFlow Extension Framework for Optimized Generation of Hardware CNN Inference Engines

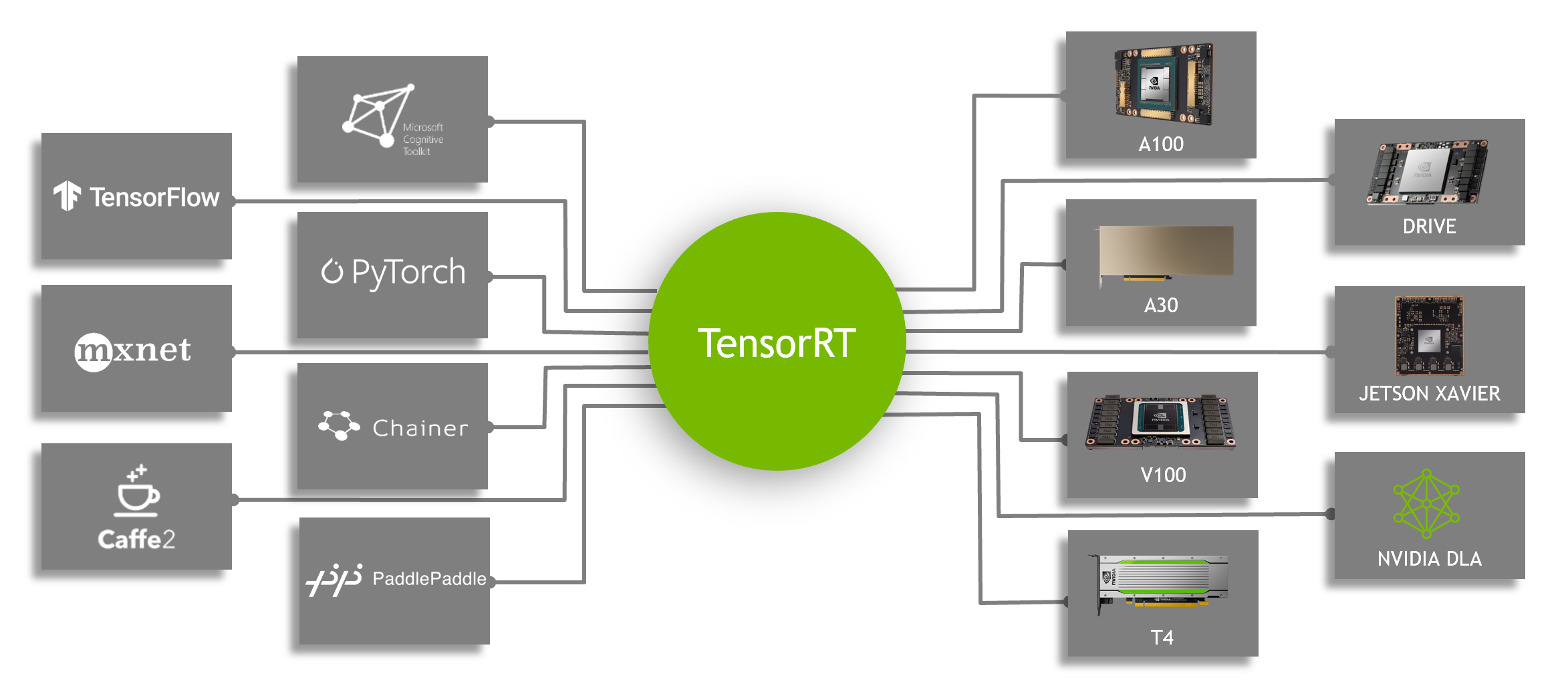

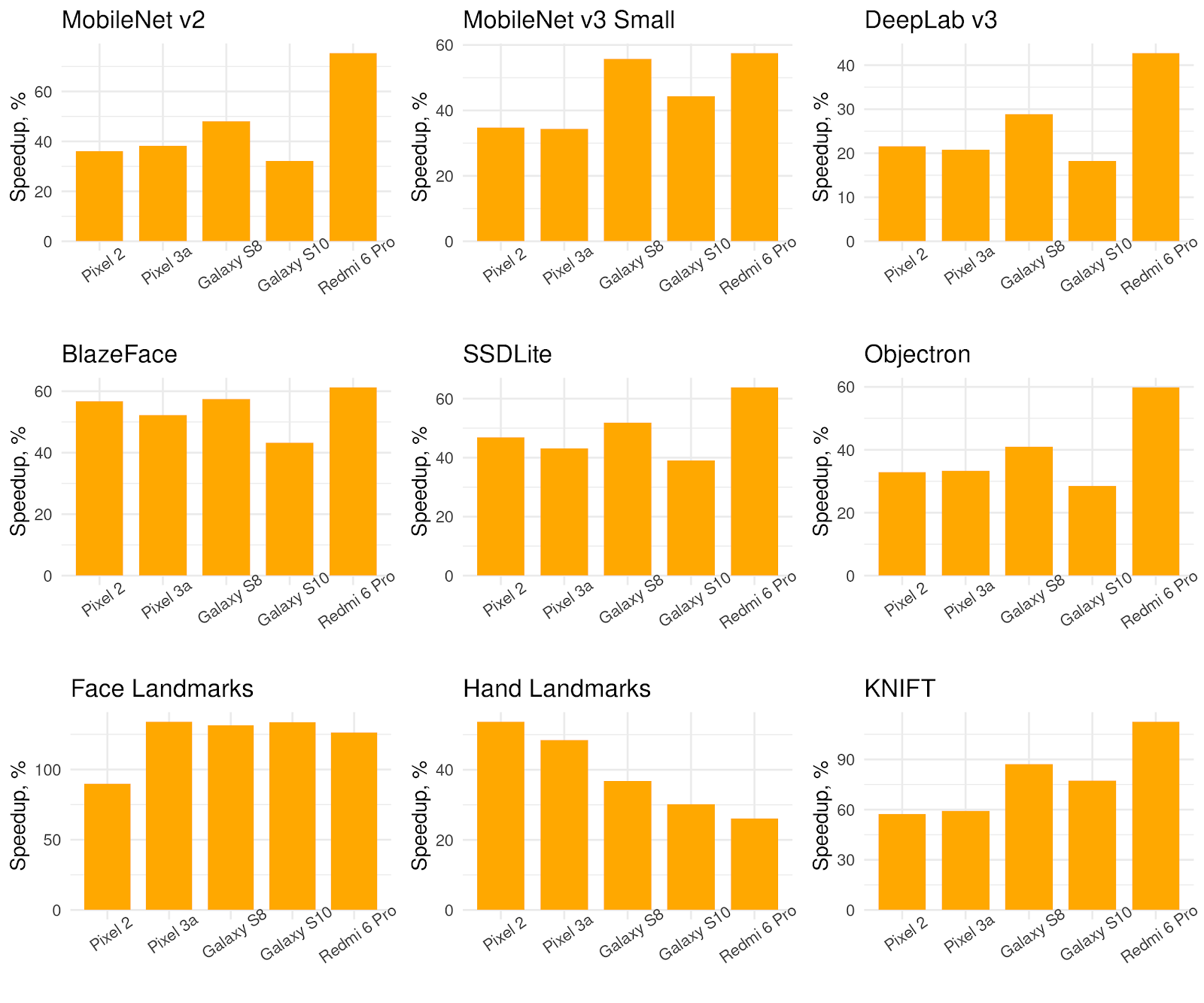

Speeding Up Deep Learning Inference Using TensorFlow, ONNX, and NVIDIA TensorRT | NVIDIA Technical Blog

GitHub - dailystudio/tflite-run-inference-with-metadata: This repository illustrates three approaches to using TensorFlow Lite models with metadata on Android platforms.

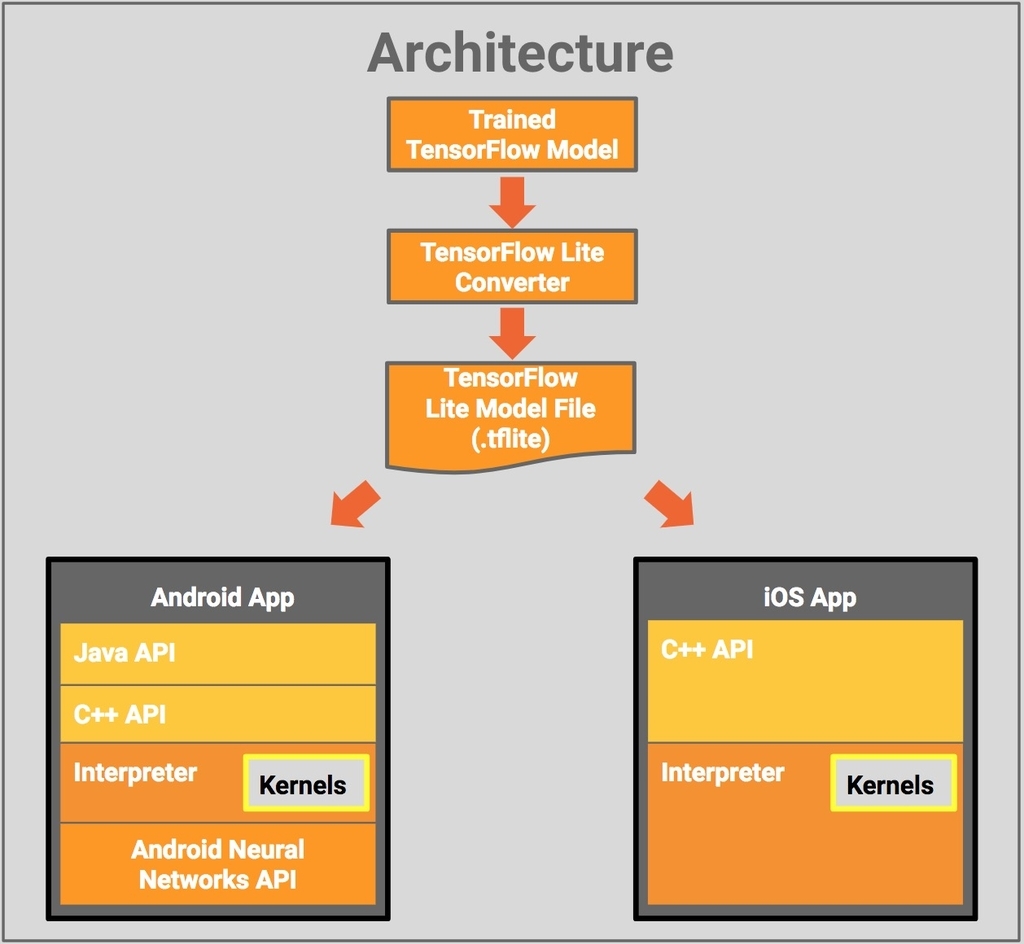
